Microsoft has featured Auquan in its latest Azure OpenAI customer stories, highlighting how our...


OpenAI announced some major changes this week, including the new OpenAI Retrieval tool, which enables ChatGPT to incorporate search engine results (from Bing) and to enhance its output using external data, such as proprietary or domain-specific documents provided by the user.

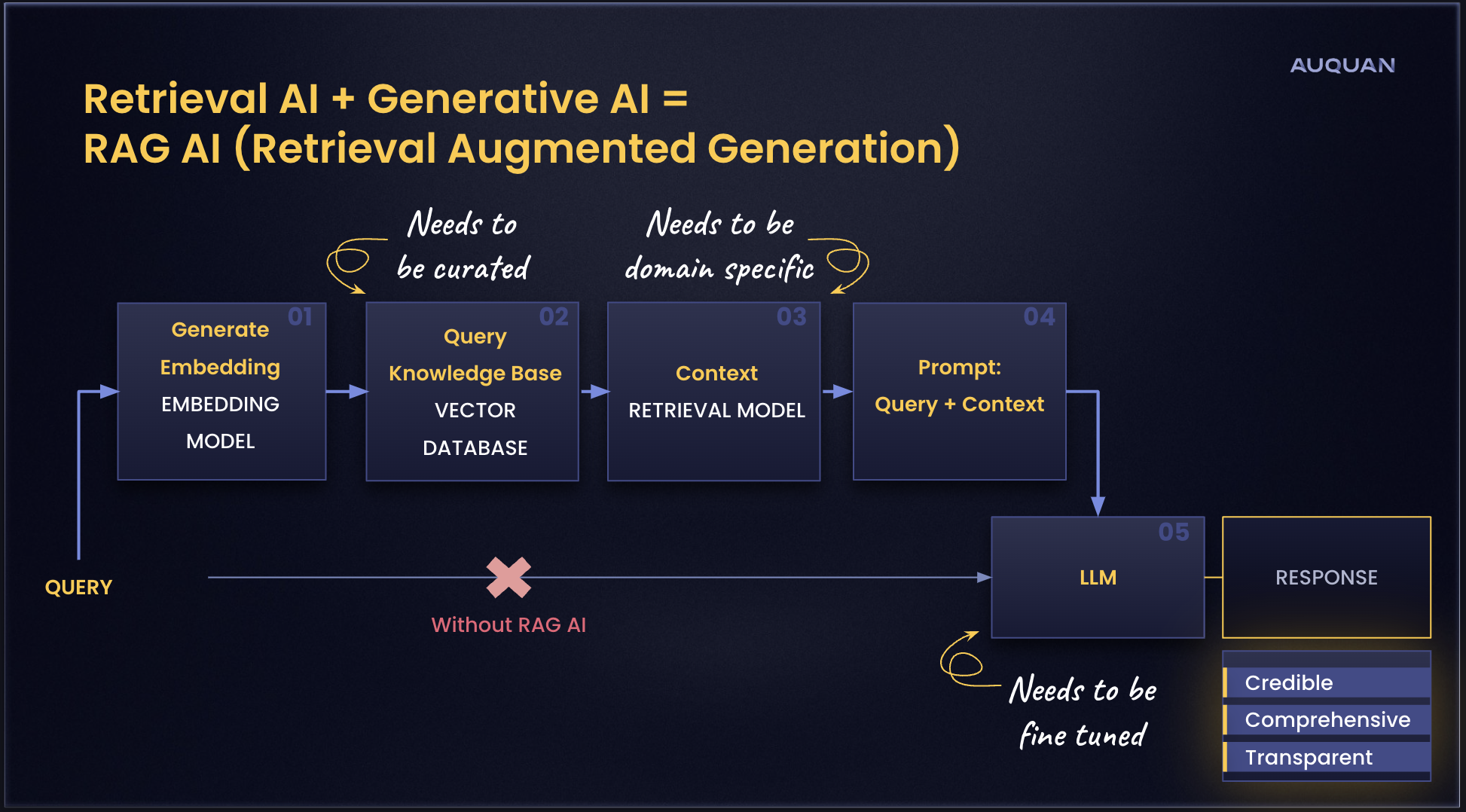

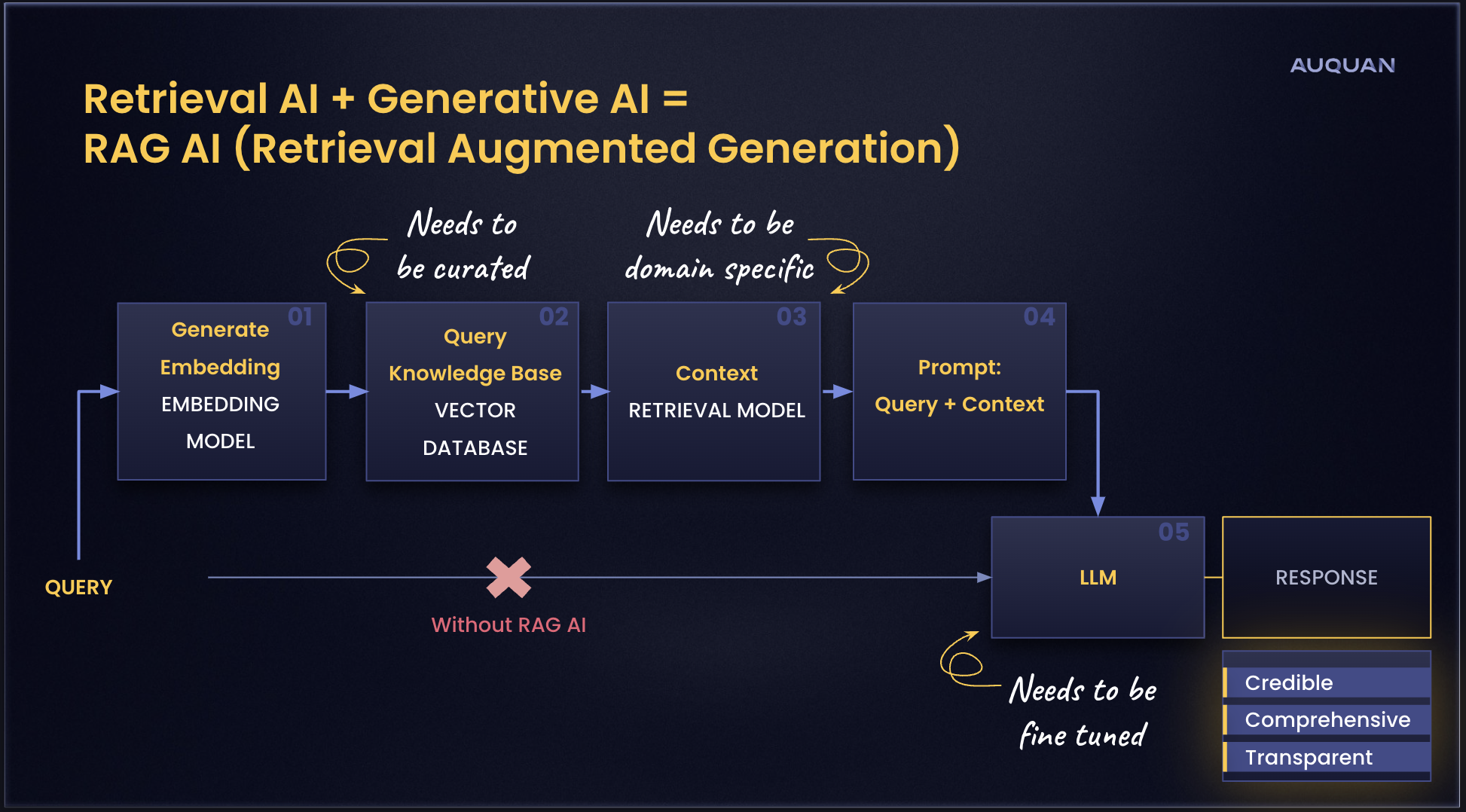

Industry observers have noted that these new capabilities mean OpenAI has adopted retrieval-augmented generation (RAG), an AI technique first developed by Meta. RAG combines retrieval-based models, which can access real-time information and industry-specific datasets, with generative models, which produce natural language responses.
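To make the retrieve-then-generate idea concrete, here is a minimal sketch of the retrieval half of a RAG pipeline. It is an illustration under stated assumptions, not how OpenAI or Auquan implement it: the document store, the document IDs, and the helper names (`retrieve`, `build_prompt`) are all hypothetical, and simple word-overlap similarity stands in for the learned embeddings a production system would likely use. The resulting prompt would then be passed to a generative model, which is left out here.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=2):
    """Return up to k (doc_id, text) pairs that best match the query."""
    q = Counter(tokenize(query))
    scored = [
        (cosine(q, Counter(tokenize(text))), doc_id, text)
        for doc_id, text in documents.items()
    ]
    scored.sort(key=lambda item: item[0], reverse=True)
    # Drop documents that share no words with the query at all.
    return [(doc_id, text) for score, doc_id, text in scored[:k] if score > 0]


def build_prompt(query, retrieved):
    """Assemble a prompt that asks the model to answer only from cited sources."""
    sources = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieved)
    return (
        "Answer using only the sources below and cite their IDs.\n"
        f"Sources:\n{sources}\n"
        f"Question: {query}"
    )


# Hypothetical domain-specific documents (invented for illustration).
documents = {
    "filing-2023": "The company disclosed deforestation risk in its palm oil supply chain.",
    "news-041": "Local media reported a regulatory fine for one supplier last month.",
    "memo-7": "Cafeteria menu changes every Tuesday.",
}

query = "What deforestation risk exists in the supply chain?"
retrieved = retrieve(query, documents, k=2)
prompt = build_prompt(query, retrieved)
print(prompt)
```

Because the retrieved passages travel with their IDs, the generative step can be asked to cite them, which is what lets a RAG-based system point back to its sources.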

OpenAI’s move to RAG makes a lot of sense.

On their own, the Large Language Models (LLMs) used by generative AI tools like ChatGPT can’t access domain-specific knowledge or up-to-date information, nor can they provide sources for the information they generate. And as we all now know, they tend to fabricate responses. A lot!

These flaws have made generative AI tools unsuitable for a variety of enterprise use cases.

Auquan built its Intelligence Engine on RAG because our financial services customers have knowledge-intensive use cases, such as ESG intelligence, KYC, and company pre-screening. All of these require processing large volumes of unstructured data pulled from domain-specific sources, such as company-produced statements, biodiversity and deforestation data, regulatory and legal documents, supplier information, and local media coverage.

Auquan’s customers also have very high expectations when it comes to the accuracy, credibility, and trustworthiness of the insights and analysis they rely upon. Having RAG under Auquan’s hood makes it possible for us to meet and exceed those expectations.

Based on our experience building our product using RAG, we expect the quality of ChatGPT to improve considerably. But those exploring generative AI for enterprise use cases should recognize that ChatGPT is still a general-purpose AI tool, albeit a very useful one!

There are some big differences between using a general-purpose, RAG-based generative AI tool like ChatGPT for enterprise use cases and using a domain-specific, RAG-based solution like Auquan, including:

We knew we made the right call by building on RAG, and the success our customers have achieved with Auquan, combined with our ability to innovate fast, is all of the evidence we need! But it’s nice to see companies like OpenAI make the same decision, and it’s further validation that this is where the industry is moving.

Each day we spotlight under-the-radar investment themes and idiosyncratic risks pulled from our intelligence engine, often involving emerging markets, supply chain issues, ESG risks, and the impact of regulatory changes.



